All your AI engineering tools in one place.

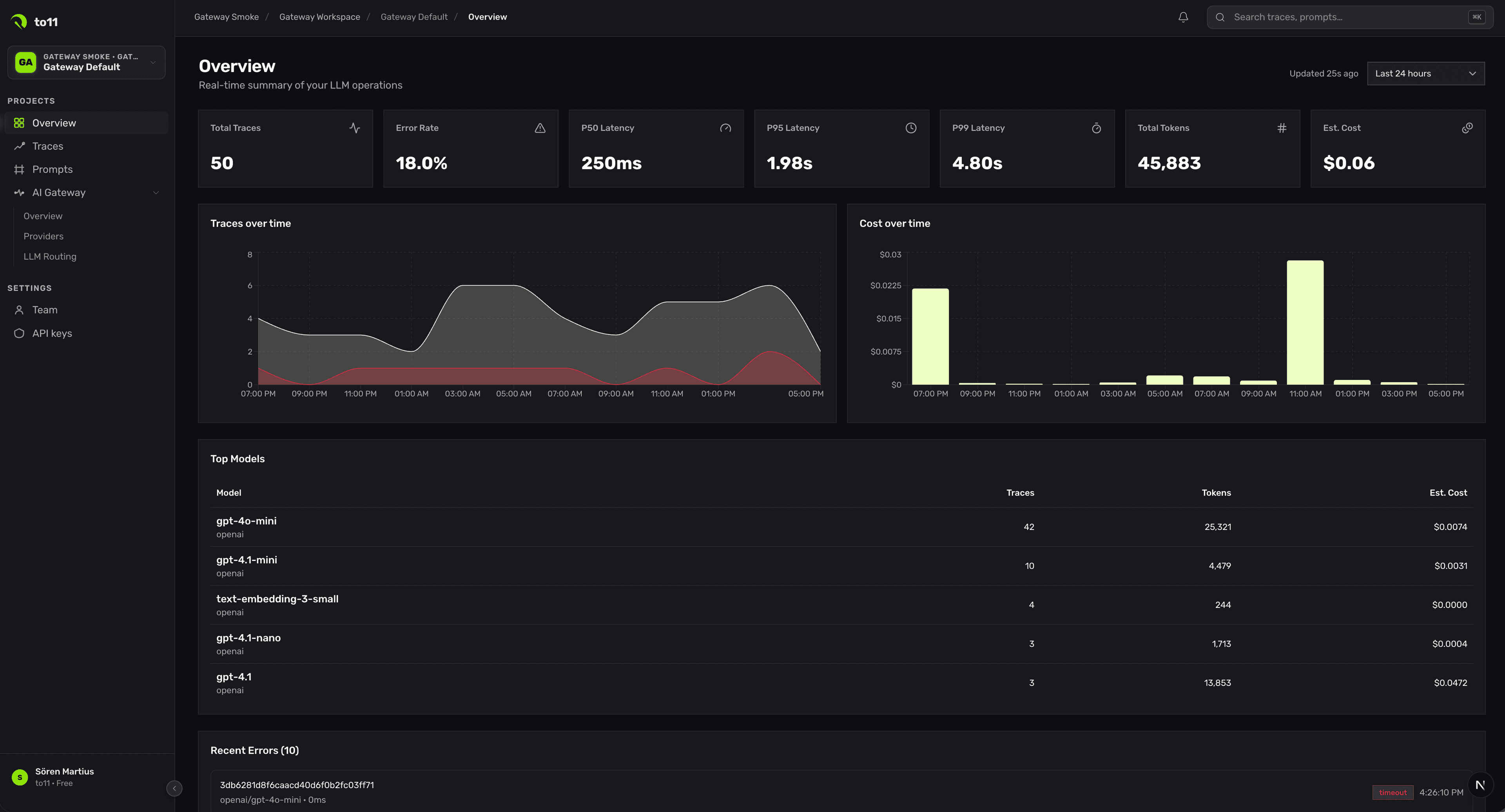

Gateway, observability, prompt management, evals, and guardrails on one integrated platform — so your team can ship faster, debug smarter, and spend less on tooling.

Trusted by teams building the future of AI.

From prototype to production, autonomously.

Fastest time-to-value

Ultra-fast AI gateway performance & OTel-native observability meet integrated prompt management. Simple to set up, easy to integrate, yet powerful.

Most AI stacks are a mess of disconnected tools.

Teams stitch together separate products for gateway routing, observability, prompt iteration, evaluations, and safety. That means duplicated instrumentation, broken debugging workflows, inconsistent data, and too many vendors to manage. to11 replaces that fragmented stack with one production system.

One request flow.

One source of truth.

Every AI request through to11 becomes the foundation for routing, tracing, evaluation, prompt iteration, and policy enforcement. Your team gets one integrated workflow for improving quality, reliability, safety, and cost in production.

Everything you need to run AI in production.

Route requests across every model, without rewrites.

One OpenAI-compatible endpoint. Failover, cost-aware routing, provider abstraction, A/B, and availability control — all policy-driven.

- Model routing

- Fallbacks & retries

- Provider abstraction

- Cost optimization

- Availability control

Every span. Every retry. Every token.

OTel-native tracing for prompts, tool calls, embeddings, retries, and downstream services. View in-app or pipe to Datadog, Honeycomb, Grafana.

- End-to-end traces

- Agent visibility

- Latency & error analysis

- Production debugging

- OTel-native schema

Versioned prompts. In your deploy flow.

A git-native catalog with environments, diffs, rollback, and reproducibility. No more copy-paste from a Notion doc.

- Versioning

- History & rollback

- Experimentation

- Collaboration

- Reproducibility

Evals that keep up with the model.

LLM-as-judge, programmatic checks, and golden datasets wired into CI. Fail a PR when quality regresses. Evaluate online traffic continuously.

- Regression detection

- Offline + online evals

- Quality scoring

- Release confidence

- Continuous improvement

Safety without a latency tax.

PII redaction, policy enforcement, prompt-injection and jailbreak detection — at the gateway, inline, sub-5ms.

- Policy enforcement

- PII & secret detection

- Safety checks

- Risk monitoring

- Compliance support

Built around the

production feedback loop.

to11 is not a bucket of features. It's a system for continuously improving AI in production — one loop that every request goes through.

What teams get with to11.

From internal copilots to production agents.

“to11 gave us one place to see prompts, failures, latency, and routing decisions instead of piecing everything together ourselves.— Lead infra engineer, Northstar

“We replaced multiple disconnected workflows with one platform and got a much faster feedback loop for improving quality.— Staff AI engineer, Helio

“Having gateway, observability, and prompt iteration tied together made debugging production issues dramatically easier.— CTO, Acre

One minute.

Two lines of code.

to11 is a drop-in replacement for the OpenAI client. Point your base URL at our gateway — every request now routes, traces, evaluates, and protects automatically.

from openai import OpenAI

client = OpenAI(base_url="https://api.to11.ai/v1", api_key=T011_KEY)

# that's it. every request now routes, traces, evals & guards.

res = client.chat.completions.create(model="claude-sonnet-4", messages=[...])Frequently asked questions.

Run AI in production without stitching together five tools.

Use one integrated platform to route, trace, evaluate, and protect every AI request. Start with the workflow you need today, then expand as your AI stack grows.